elastic search自定义分析器

这里我们定义两个字段分别用n-gram与ik两种分词器。n-gram可以分成最小最大的用法,也可指定分词器,我们这里指定使用snowball。

准备索引,删除以前的

DELETE /book2

1.定义索引

PUT book2

{

"settings": {

"analysis": {

"filter": {

"autocomplete_filter": {

"type": "edge_ngram",

"min_gram": 1,

"max_gram": 20

}

},

"analyzer": {

"autocomplete": {

"type": "custom",

"tokenizer": "standard",

"filter": [

"lowercase",

"snowball"

]

}

}

}

},

"mappings": {

"properties": {

"name": {

"type": "text",

"analyzer": "autocomplete"

},

"title": {

"type": "text",

"analyzer": "ik_max_word"

}

}

}

}这里定义了索引book2,两个字段name与title,分别用snowball的n-gram分词器与ik分词器。

2.再放置三条记录(document)

PUT /book2/_doc/1

{

"age": 1,

"email": "1@com",

"name": "administrators",

"title": "中华人民共和国"

}

PUT /book2/_doc/2

{

"age": 2,

"email": "2@com",

"name": "admin",

"title": "中华人民"

}

PUT /book2/_doc/3

{

"age": 3,

"email": "3@com",

"name": "administr",

"title": "中华"

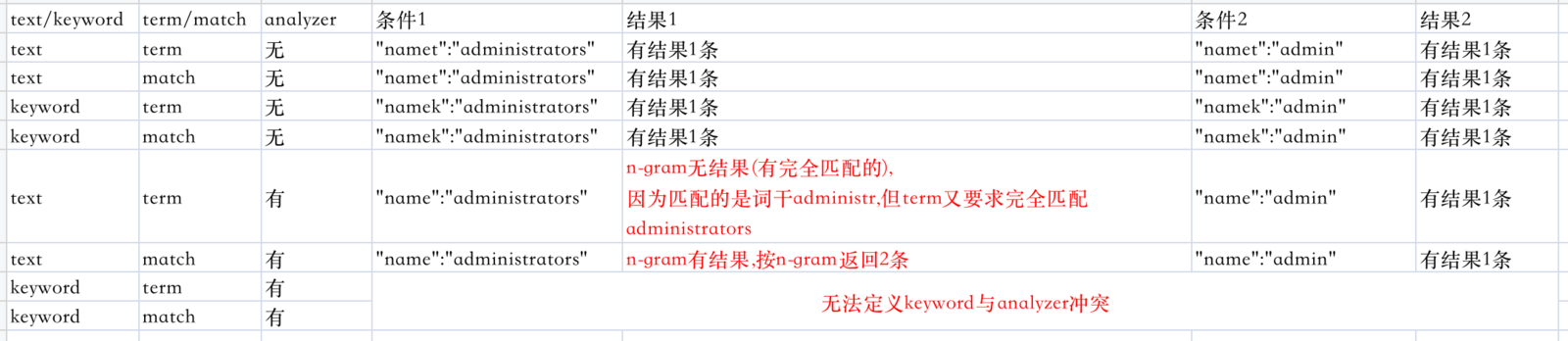

}然后我们分别用term与match来进行查询:

GET /book2/_search

{

"explain": true,

"query": {

"bool":{

"must": { "match_all": {} },

"filter": {

"term":{

"name":"administrators"

}

}

}

}

}GET /book2/_search

{

"explain": true,

"query": {

"bool":{

"must": { "match_all": {} },

"filter": {

"match":{

"name":"administrators"

}

}

}

}

}这时会发现term查询有些问题,本来administrators应该能精确匹配的,但没查出数据来。这时去掉mapping里定义的分词,就可以用term查询了。3.最后,用一个bool查询两个字段都需满足条件的。

用match,没有问题。

换成term,相当于like '%xx%'

GET /book2/_search

{

"explain": true,

"query": {

"bool":{

"must": { "match_all": {} },

"filter": {

"bool":{

"must":[

{

"match":{

"title":"人民"

}

},

{

"match":{

"name":"administrators"

}

}

]

}

}

}

}

}试验结果:

相关阅读

评论:

↓ 广告开始-头部带绿为生活 ↓

↑ 广告结束-尾部支持多点击 ↑